Demos

A Review of Automatic Drum Transcription

In Western popular music, drums and percussion are an important means to emphasize and shape the rhythm, often defining the musical style. If computers were able to analyze the drum part in recorded music, it would enable a variety of rhythm-related music processing tasks. In particular, the detection and classification of drum sound events by computational methods is considered an important and challenging research problem in the broader field of Music Information Retrieval. Over the last two decades, several authors have attempted to tackle this problem under the umbrella term Automatic Drum Transcription (ADT). This paper presents a comprehensive review of ADT research, including a thorough discussion of the task-specific challenges, a categorization of existing techniques, and an evaluation of several state-of-the-art systems. To provide more insight into the practice of ADT systems, we focus on two families of ADT techniques, namely methods based on Nonnegative Matrix Factorization and Recurrent Neural Networks. We explain the methods' technical details and drum-specific variations and evaluate these approaches on publicly available datasets with a consistent experimental setup. Finally, open issues and under-explored areas in ADT research are identified and discussed, providing future directions in this field.

Unifying Local and Global Methods for Harmonic-Percussive Source Separation

This paper addresses the separation of drums from music recordings, a task closely related to harmonic-percussive source separation (HPSS). In previous works, two families of algorithms have been prominently applied to this problem. They are based either on local filtering and diffusion schemes, or on global low-rank models. In this paper, we propose to combine the advantages of both paradigms. To this end, we use a local approach based on Kernel Additive Modeling (KAM) to extract an initial guess for the percussive and harmonic parts. Subsequently, we use Non-Negative Matrix Factorization (NMF) with soft activation constraints as a global approach to jointly enhance both estimates. As an additional contribution, we introduce a novel constraint for enhancing percussive activations and a scheme for estimating the percussive weight of NMF components. Throughout the paper, we use a real-world music example to illustrate the ideas behind our proposed method. Finally, we report promising BSS Eval results achieved with the publicly available test corpora ENST-Drums and QUASI, which contain isolated drum and accompaniment tracks.
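To illustrate the global low-rank model mentioned above, the following is a minimal sketch of plain NMF with the standard multiplicative updates for the Euclidean cost. It is not the method proposed in the paper: the KAM-based initialization, the soft activation constraints, and the percussive weighting are all omitted, and the function name `nmf`, its parameters, and the random toy matrix are assumptions made for illustration only.

```python
import numpy as np

def nmf(V, rank, n_iter=200, eps=1e-10, seed=0):
    """Plain NMF (illustrative only): V ~ W @ H with W, H >= 0.

    V    : nonnegative matrix, e.g. a magnitude spectrogram (bins x frames)
    rank : number of components (spectral templates)
    """
    rng = np.random.default_rng(seed)
    n_bins, n_frames = V.shape
    W = rng.random((n_bins, rank)) + eps    # spectral templates
    H = rng.random((rank, n_frames)) + eps  # time-varying activations
    for _ in range(n_iter):
        # Multiplicative updates for the Euclidean cost keep W and H nonnegative.
        H *= (W.T @ V) / (W.T @ W @ H + eps)
        W *= (V @ H.T) / (W @ H @ H.T + eps)
    return W, H

# Toy input (hypothetical): an exactly rank-2 nonnegative matrix.
rng = np.random.default_rng(1)
V = rng.random((20, 2)) @ rng.random((2, 30))
W, H = nmf(V, rank=2)
rel_err = np.linalg.norm(V - W @ H) / np.linalg.norm(V)
print(f"relative reconstruction error: {rel_err:.4f}")
```

In a separation setting, each column of `W` would be interpreted as a spectral template and each row of `H` as its activation over time.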

A Swingogram Representation for Tracking Micro-Rhythmic Variation in Jazz Performances

A typical micro-rhythmic trait of jazz performances is their “swing feel.” According to several studies, uneven eighth notes contribute decisively to this perceived quality. In this paper we analyze the swing ratio (beat-upbeat ratio) implied by the drummer on the ride cymbal. Extending previous work, we propose a new method for semi-automatic swing ratio estimation based on pattern recognition in onset sequences. As a main contribution, we introduce a novel time-swing ratio representation called swingogram, which locally captures information related to the swing ratio over time. Based on this representation, we propose to track the most plausible trajectory of the swing ratio of the ride cymbal pattern over time via dynamic programming. We show how this kind of visualization leads to interesting insights into the peculiarities of jazz musicians improvising together.
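The two ideas in this abstract, the swing ratio itself and the tracking of a trajectory through a time-ratio representation, can be sketched as follows. This is an illustration under stated assumptions, not the paper's formulation: the swing ratio is taken here as the duration from beat to upbeat divided by the duration from upbeat to the next beat, and the salience matrix, the linear jump penalty, and the names `swing_ratio` and `track_trajectory` are hypothetical.

```python
import numpy as np

def swing_ratio(beat, upbeat, next_beat):
    """Assumed definition: long (on-beat) eighth divided by short (off-beat) eighth."""
    return (upbeat - beat) / (next_beat - upbeat)

def track_trajectory(S, jump_penalty=1.0):
    """Find a smooth high-salience path through S via dynamic programming.

    S : (n_ratio_bins, n_frames) salience matrix (a stand-in for a swingogram)
    Returns the selected ratio-bin index for every frame.
    """
    n_bins, n_frames = S.shape
    idx = np.arange(n_bins)
    # trans[i, j]: penalty for moving from bin j (previous frame) to bin i.
    trans = -jump_penalty * np.abs(idx[:, None] - idx[None, :])
    D = np.empty((n_bins, n_frames))           # accumulated scores
    back = np.zeros((n_bins, n_frames), dtype=int)
    D[:, 0] = S[:, 0]
    for t in range(1, n_frames):
        scores = D[:, t - 1][None, :] + trans  # scores[i, j]
        back[:, t] = np.argmax(scores, axis=1)
        D[:, t] = S[:, t] + scores[idx, back[:, t]]
    # Backtrack from the best final bin.
    path = np.empty(n_frames, dtype=int)
    path[-1] = np.argmax(D[:, -1])
    for t in range(n_frames - 1, 0, -1):
        path[t - 1] = back[path[t], t]
    return path

# Toy salience: a steady ridge in bin 2 plus one spurious peak in bin 4.
S = np.zeros((5, 6))
S[2, :] = 1.0
S[4, 3] = 1.5
path = track_trajectory(S, jump_penalty=1.0)
print(path)                 # stays on the ridge
print(np.argmax(S, axis=0)) # frame-wise maximum follows the spurious peak
```

The penalty term is what distinguishes the tracked trajectory from a frame-wise maximum: isolated peaks are ignored unless they outweigh the cost of leaving and rejoining the ridge.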

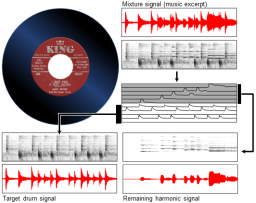

Reverse Engineering the Amen Break - Score-informed Separation and Restoration applied to Drum Recordings

In view of applying Music Information Retrieval (MIR) techniques for music production, our goal is to extract high-quality component signals from drum solo recordings (so-called breakbeats). Specifically, we employ audio source separation techniques to recover sound events from the drum sound mixture that correspond to the individual drum strokes. Our separation approach is based on an informed variant of Non-Negative Matrix Factor Deconvolution (NMFD) that has been proposed and applied to drum transcription and separation in earlier works. In this article, we systematically study the suitability of NMFD and the impact of audio- and score-based side information in the context of drum separation. In the case of imperfect decompositions, we observe different cross-talk artifacts appearing during the attack and the decay segment of the extracted drum sounds. Based on these findings, we propose and evaluate two extensions to the core technique. The first extension is based on applying a cascaded NMFD decomposition while retaining selected side information. The second extension is a time-frequency selective restoration approach using a dictionary of single note drum sounds. For all our experiments, we use a publicly available data set consisting of multi-track drum recordings and corresponding annotations that allows us to evaluate the source separation quality. Using this test set, we show that our proposed methods can lead to an improved quality of the component signals.
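For readers unfamiliar with NMFD, the sketch below shows only its forward model: each component has a short spectrogram template spanning several frames, and the mixture is approximated by placing that template wherever the component's activation is nonzero. The update rules, the score-informed constraints, and the cascaded and restoration extensions described above are not covered. The array layout and the names `shift_right` and `nmfd_model` are assumptions for illustration.

```python
import numpy as np

def shift_right(H, tau):
    """Shift the columns of H right by tau frames, zero-padding on the left."""
    if tau == 0:
        return H.copy()
    out = np.zeros_like(H)
    out[:, tau:] = H[:, :-tau]
    return out

def nmfd_model(W, H):
    """Convolutive approximation V_hat = sum_tau W[tau] @ shift_right(H, tau).

    W : (T, n_bins, K) stack of K templates, each T frames long
    H : (K, n_frames) activations
    """
    T = W.shape[0]
    return sum(W[tau] @ shift_right(H, tau) for tau in range(T))

# Toy example: one component, one drum stroke at frame 2.
rng = np.random.default_rng(0)
W = rng.random((3, 4, 1))   # template of 3 frames, 4 frequency bins
H = np.zeros((1, 8))
H[0, 2] = 1.0
V_hat = nmfd_model(W, H)
# Frames 2, 3 and 4 of V_hat now hold the three template frames.
```

A single activation impulse thus reproduces the whole template, which is why the model suits drum strokes with a characteristic attack and decay.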

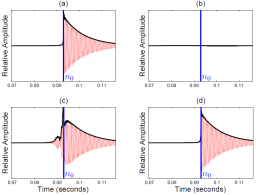

Towards Transient Restoration in Score-informed Audio Decomposition

Our goal is to improve the perceptual quality of transient signal components extracted in the context of music source separation. Many state-of-the-art techniques are based on applying a suitable decomposition to the magnitude of the Short-Time Fourier Transform (STFT) of the mixture signal. The phase information required for the reconstruction of individual component signals is usually taken from the mixture, resulting in a complex-valued, modified STFT (MSTFT). There are different methods for reconstructing a time-domain signal whose STFT approximates the target MSTFT. Due to phase inconsistencies, these reconstructed signals are likely to contain artifacts such as pre-echos preceding transient components. In this paper, we propose a simple, yet effective extension of the iterative signal reconstruction procedure by Griffin and Lim to remedy this problem. In a first experiment, under laboratory conditions, we show that our method considerably attenuates pre-echos while still showing convergence properties similar to those of the original approach. A second, more realistic experiment involving score-informed audio decomposition shows that the proposed method still yields improvements, although to a lesser extent, under non-idealized conditions.
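As background, the baseline iterative reconstruction by Griffin and Lim can be sketched as follows. This is the original procedure only, not the transient-preserving extension proposed in the paper. The small STFT and inverse STFT helpers, the window and hop sizes, the random phase initialization, and the test signal are assumptions chosen to keep the example self-contained.

```python
import numpy as np

def stft(x, n_fft=256, hop=64):
    """STFT with a Hann window; returns an array of shape (n_fft//2 + 1, n_frames)."""
    w = np.hanning(n_fft)
    n_frames = 1 + (len(x) - n_fft) // hop
    frames = np.stack([x[i * hop:i * hop + n_fft] * w for i in range(n_frames)], axis=1)
    return np.fft.rfft(frames, axis=0)

def istft(X, n_fft=256, hop=64):
    """Least-squares inverse STFT: windowed overlap-add, normalized by the summed squared window."""
    w = np.hanning(n_fft)
    n_frames = X.shape[1]
    length = n_fft + hop * (n_frames - 1)
    x = np.zeros(length)
    norm = np.zeros(length)
    frames = np.fft.irfft(X, n=n_fft, axis=0)
    for i in range(n_frames):
        seg = slice(i * hop, i * hop + n_fft)
        x[seg] += frames[:, i] * w
        norm[seg] += w ** 2
    return x / np.maximum(norm, 1e-8)

def griffin_lim(A, n_iter=50, n_fft=256, hop=64, seed=0):
    """Estimate a time-domain signal whose STFT magnitude approximates A."""
    rng = np.random.default_rng(seed)
    phase = np.exp(2j * np.pi * rng.random(A.shape))  # random initial phase
    for _ in range(n_iter):
        x = istft(A * phase, n_fft, hop)              # back to the time domain
        phase = np.exp(1j * np.angle(stft(x, n_fft, hop)))  # keep phase, discard magnitude
    return istft(A * phase, n_fft, hop)

# Toy signal (hypothetical): a sinusoid with a click, 31 frames long.
sr = 8000
n = 256 + 64 * 30
x = np.sin(2 * np.pi * 440 * np.arange(n) / sr)
x[1000] += 5.0
A = np.abs(stft(x))
x_hat = griffin_lim(A, n_iter=50)
```

Each iteration projects the current estimate onto the set of valid STFTs and then re-imposes the target magnitude, so the mismatch between `np.abs(stft(x_hat))` and `A` shrinks as the iteration count grows.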

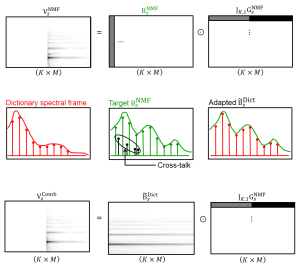

A Separate and Restore Approach to Score-Informed Music Decomposition

Our goal is to improve the perceptual quality of signal components extracted in the context of music source separation. Specifically, we focus on decomposing polyphonic, mono-timbral piano recordings into the sound events that correspond to the individual notes of the underlying composition. Our separation technique is based on score-informed Non-Negative Matrix Factorization (NMF) that has been proposed in earlier works as an effective means to enforce a musically meaningful decomposition of piano music. However, the method still has certain shortcomings for complex mixtures where the tones strongly overlap in frequency and time. As the main contribution of this paper, we propose adding a restoration stage based on refined Wiener filter masks to score-informed NMF. Our idea is to introduce notewise soft masks created from a dictionary of perfectly isolated piano tones, which are then adapted to match the timbre of the target components. A basic experiment with mixtures of piano tones shows improvements of our novel reconstruction method with regard to perceptually motivated separation quality metrics. A second experiment with more complex piano recordings shows that further investigations into the concept are necessary for real-world applicability.
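The general idea of Wiener-like soft masking can be sketched as follows: given a magnitude estimate for every component, each time-frequency bin of the mixture is distributed among the components in proportion to their estimated power. This shows generic soft masks only. The refinement proposed in the paper, in which masks are built from a dictionary of isolated piano tones and adapted to the target timbre, is not reproduced here, and the names `soft_masks` and `separate`, the `power` parameter, and the random toy data are assumptions.

```python
import numpy as np

def soft_masks(component_mags, power=2.0, eps=1e-12):
    """One mask per component: mask_k = M_k**power / sum_j M_j**power."""
    P = np.stack(component_mags) ** power
    return P / (P.sum(axis=0, keepdims=True) + eps)

def separate(X_mix, component_mags, power=2.0):
    """Apply the masks to the complex mixture STFT; the mixture phase is reused."""
    return soft_masks(component_mags, power) * X_mix[None, :, :]

# Toy data (hypothetical): two component magnitude estimates and a complex mixture.
rng = np.random.default_rng(0)
mags = [rng.random((5, 7)) + 0.1, rng.random((5, 7)) + 0.1]
X_mix = rng.standard_normal((5, 7)) + 1j * rng.standard_normal((5, 7))
components = separate(X_mix, mags)
```

Because the masks sum to one in every bin, the separated components add up to the mixture again, which is the usual motivation for using soft masks instead of resynthesizing from the model magnitudes directly.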